AI SUMMARY

Deploying AI agents in production is the most significant engineering challenge of 2026. While 2025 was defined by simple "chatbot" interactions, the current landscape demands autonomous entities that manage long-term state, recover from logical loops, and maintain consistent performance across millions of tool calls. This article dissects the architecture of "Sovereign Agents." It moves beyond simple prompting into complex state machines, a multi-tier memory taxonomy, and the inevitable failure modes that crush naive implementations.

Table of Contents

- The 2026 Reality: Prototypes ≠ Production

- The Memory Taxonomy: Short-term, Episodic, and Semantic

- State Management Patterns: Stateful vs. Stateless Orchestration

- The Failure Cascades: Hallucination Loops and Context Drift

- The Observability Stack: Tracing, Evals, and Feedback Loops

- Production Architecture: The Sovereign Agent Blueprint

- Case Study: The 'Infinite Loop' Disaster of 2025

- The Action Gap: From Thinking to Doing

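- 2027–2030 Roadmap: The Rise of the Agentic OS

- Deep Dive: Securing the Agentic Perimeter

- Orchestration Frameworks: CrewAI vs. LangGraph in 2026

- Strategic FAQ for Senior Architects

- The Architect's Checklist: 10 Commandments of Agentic Production

- Governance: The 'CISO for Agents' Model

- The Recovery Blueprint: Surviving the Logic Storm

- The Mathematical Divergence of Agentic Entropy

- The Sovereign Future: Agents as Infrastructure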

1. The 2026 Reality: Prototypes ≠ Production

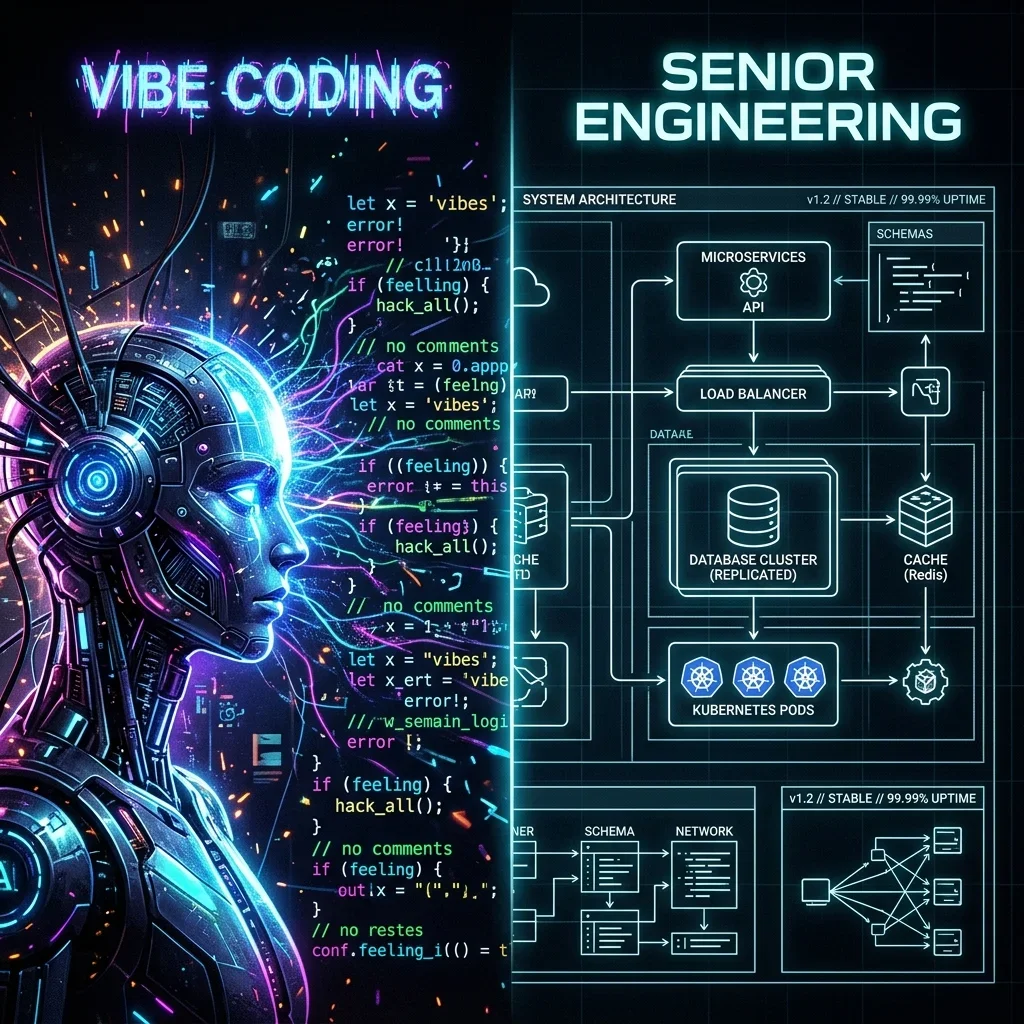

In 2024, an "AI Agent" was often just a loop that called an LLM API until a keyword was found. In 2026, that approach is considered a "Vibe Prototype." Production agents today are sophisticated distributed systems that must handle concurrency, rate limits, and non-deterministic logic at scale.

The primary difference between a prototype and a production agent is Reliability. A prototype works 80% of the time. A production agent that works 80% of the time is a liability. To reach "Five Nines" (99.999%) reliability, we must move from "Chat" to "State."

Explore the broader paradigm shift in my companion article: Agentic AI vs. Generative AI: Designing the Autonomous Workforce.

2. The Memory Taxonomy: Short-term, Episodic, and Semantic

The biggest breakthrough in 2026 agentic engineering is the formalization of Agent Memory. We no longer treat "context" as a single blob of text. Instead, we architect memory as a multi-tier system.

A. Short-term (Working) Memory

This is the current "context window." It holds the immediate history of the conversation and the current tool outputs. In 2026, we use Context Compression techniques to preserve the most relevant tokens and discard the noise.

B. Episodic Memory

This is the "Journal" of the agent. It records specific instances of past actions and their results. If an agent failed to solve a bug yesterday, its episodic memory allows it to recall why it failed today.

C. Semantic Memory

This is the agent's "Knowledge Base." It consists of vectorized facts, documentation, and world knowledge. This is typically implemented via RAG (Retrieval-Augmented Generation) using high-performance vector databases like Qdrant or Pinecone.

D. Procedural Memory

The "How-To" of the agent. This memory stores the optimized sequences of tool calls and logic flows that have proven successful in the past. It is the agent's version of "Muscle Memory."
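The four tiers can be sketched as plain Python data structures. This is a minimal in-process illustration, and every class and method name in it (`AgentMemory`, `recall_episodes`, and so on) is hypothetical. Semantic memory would normally sit in a vector database such as Qdrant or Pinecone. Here a naive keyword-overlap score stands in for embedding similarity.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Episode:
    task: str
    action: str
    outcome: str
    success: bool


@dataclass
class AgentMemory:
    # Short-term (working) memory: a bounded window of recent messages.
    working: deque = field(default_factory=lambda: deque(maxlen=20))
    # Episodic memory: an append-only journal of past actions and results.
    episodes: list = field(default_factory=list)
    # Semantic memory: facts keyed by id (a stand-in for a vector store).
    facts: dict = field(default_factory=dict)
    # Procedural memory: task type -> tool-call sequence that succeeded.
    procedures: dict = field(default_factory=dict)

    def remember_turn(self, message: str) -> None:
        self.working.append(message)  # the oldest message is evicted when full

    def record_episode(self, episode: Episode) -> None:
        self.episodes.append(episode)

    def recall_episodes(self, task: str) -> list:
        # Recall what happened on earlier attempts at this exact task.
        return [e for e in self.episodes if e.task == task]

    def search_facts(self, query: str, k: int = 3) -> list:
        # Naive keyword overlap in place of embedding similarity.
        q = set(query.lower().split())
        ranked = sorted(
            self.facts.values(),
            key=lambda text: len(q & set(text.lower().split())),
            reverse=True,
        )
        return ranked[:k]

    def save_procedure(self, task_type: str, tool_calls: list) -> None:
        self.procedures[task_type] = list(tool_calls)

    def lookup_procedure(self, task_type: str):
        return self.procedures.get(task_type)  # None if nothing was learned yet
```

Keeping the tiers as separate stores lets each one use its own retention policy. Working memory evicts aggressively, the episodic journal only grows, and procedures are overwritten when a better sequence succeeds.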

3. State Management Patterns: Stateful vs. Stateless Orchestration

How you manage state determines how your agent handles failures and resumes work.

Stateless Agents

These agents receive the entire history with every request. They are easy to scale but become prohibitively expensive as the conversation grows.

- Best for: Simple, one-off tasks (e.g., data extraction).

- Risk: Token cost explosion.

Stateful Agents

These agents maintain a persistent record of their state in a database (e.g., Redis or PostgreSQL). The agent retrieves only the relevant part of its state when needed.

- Best for: Long-running workflows (e.g., code refactoring, project management).

- Risk: State corruption or "Logic Drift."

| Feature | Stateless Orchestration | Stateful Sovereignty |
|---|---|---|
| Complexity | Low | High |
| Resiliency | Low (single session) | High (checkpointing) |
| Cost Efficiency | Decreases over time | Optimized via pruning |
| Best Use Case | Ad-hoc queries | Industrial automation |
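The stateful pattern can be sketched with checkpointing. This minimal version uses Python's built-in `sqlite3` as a stand-in for PostgreSQL, and a Redis version would follow the same shape. Each completed step writes a checkpoint, so a restarted worker resumes after the last completed step instead of replaying the whole history. The table layout and function names are illustrative assumptions.

```python
import json
import sqlite3


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS checkpoints ("
        " session_id TEXT, step INTEGER, state TEXT,"
        " PRIMARY KEY (session_id, step))"
    )
    return conn


def save_checkpoint(conn, session_id: str, step: int, state: dict) -> None:
    conn.execute(
        "INSERT OR REPLACE INTO checkpoints VALUES (?, ?, ?)",
        (session_id, step, json.dumps(state)),
    )
    conn.commit()


def load_latest(conn, session_id: str):
    """Return (step, state) for the newest checkpoint, or (0, {}) if none exists."""
    row = conn.execute(
        "SELECT step, state FROM checkpoints WHERE session_id = ?"
        " ORDER BY step DESC LIMIT 1",
        (session_id,),
    ).fetchone()
    return (row[0], json.loads(row[1])) if row else (0, {})


def run_workflow(conn, session_id: str, steps: list) -> dict:
    # Resume after the last completed step rather than starting over.
    done, state = load_latest(conn, session_id)
    for i, step_fn in enumerate(steps[done:], start=done + 1):
        state = step_fn(state)
        save_checkpoint(conn, session_id, i, state)
    return state
```

If the process crashes during step 3, the next call to `run_workflow` loads the step-2 checkpoint and runs only the remaining steps.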

4. The Failure Cascades: Hallucination Loops and Context Drift

In production, agents don't just "fail"—they fail in spectacular, recursive ways.

The Hallucination Loop

This occurs when an agent makes a mistake, observes the error, and then tries to fix it using the same flawed reasoning that caused the error. Without a Sovereign Auditor, the agent will loop until it exhausts its budget or the context window.

Tool-Call Storms

When an agent is unsure how to proceed, it may try to call every available tool in its repertoire simultaneously. This can lead to a self-inflicted DDoS attack on your internal microservices.

Context Drift

As an agent works through a long task, the "Original Intent" can become buried under layers of tool outputs and intermediate reasoning. The agent eventually "forgets" what it was trying to achieve and begins hallucinating new goals.
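One common mitigation for tool-call storms is a hard concurrency cap between the agent and your services. The sketch below uses `asyncio.Semaphore`. The limit of 4 is a hypothetical value, and `call_tool` is a placeholder for a real tool client. It records the peak number of in-flight calls so the cap can be checked.

```python
import asyncio

MAX_CONCURRENT_TOOL_CALLS = 4  # hypothetical per-agent limit


async def call_tool(name: str, stats: dict) -> str:
    # Placeholder for a real network call to an internal service.
    stats["in_flight"] += 1
    stats["peak"] = max(stats["peak"], stats["in_flight"])
    await asyncio.sleep(0.01)
    stats["in_flight"] -= 1
    return f"{name}: ok"


async def guarded_call(sem: asyncio.Semaphore, name: str, stats: dict) -> str:
    async with sem:  # at most MAX_CONCURRENT_TOOL_CALLS run at once
        return await call_tool(name, stats)


async def fan_out(tool_names: list) -> tuple:
    sem = asyncio.Semaphore(MAX_CONCURRENT_TOOL_CALLS)
    stats = {"in_flight": 0, "peak": 0}
    results = await asyncio.gather(
        *(guarded_call(sem, n, stats) for n in tool_names)
    )
    return results, stats["peak"]
```

Even if the agent requests 20 tools at once, for example with `asyncio.run(fan_out([f"tool_{i}" for i in range(20)]))`, only four calls reach your services at any moment. The rest queue behind the semaphore.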

5. The Observability Stack: Tracing, Evals, and Feedback Loops

You cannot manage what you cannot see. 2026 observability is not about "logs"—it's about Traces.

Distributed Tracing for Agents

We use tools like LangSmith or custom Arize Phoenix implementations to trace every "thought" an agent has. We need to see the exact prompt sent to the LLM, the exact JSON returned, and the resulting tool execution.

Trace Parameters for Production:

- P99 Inference Latency: The 99th-percentile time the orchestrator takes to decide on its next action.

- Tool Failure Rate: The percentage of tool calls that return an error or malformed output.

- Token Efficiency: The ratio of useful tokens (output) to overhead tokens (repetitive context).
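The three metrics can be computed from exported trace spans. In this minimal sketch, the `Span` record is a hypothetical simplification, not the LangSmith or Phoenix schema. P99 uses the nearest-rank method, and the "useful" and "overhead" token counts are assumed to be tagged upstream.

```python
import math
from dataclasses import dataclass


@dataclass
class Span:
    kind: str              # "decision" (orchestrator step) or "tool"
    latency_ms: float
    error: bool = False
    useful_tokens: int = 0
    overhead_tokens: int = 0


def p99(values: list) -> float:
    # Nearest-rank percentile: the smallest value with >= 99% of samples at or below it.
    ordered = sorted(values)
    rank = math.ceil(99 * len(ordered) / 100)
    return ordered[rank - 1]


def trace_metrics(spans: list) -> dict:
    decisions = [s.latency_ms for s in spans if s.kind == "decision"]
    tools = [s for s in spans if s.kind == "tool"]
    useful = sum(s.useful_tokens for s in spans)
    overhead = sum(s.overhead_tokens for s in spans)
    return {
        "p99_inference_latency_ms": p99(decisions) if decisions else None,
        "tool_failure_rate": (
            sum(s.error for s in tools) / len(tools) if tools else None
        ),
        "token_efficiency": useful / overhead if overhead else None,
    }
```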

Continuous Evaluation (Evals)

A production agent must be constantly tested against a "Golden Dataset." If a model update causes a 2% drop in reasoning accuracy, your deployment pipeline should automatically roll back.
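A rollback gate for a deployment pipeline can be sketched in a few lines. This version makes several assumptions. The golden dataset is a list of (input, expected) pairs scored by exact match, and the 2% threshold is read as two percentage points of accuracy. `run_agent` is a hypothetical callable standing in for the deployed agent.

```python
from typing import Callable

MAX_ACCURACY_DROP = 0.02  # two percentage points (an interpretation of "2%")


def accuracy(run_agent: Callable[[str], str], golden: list) -> float:
    # Exact-match scoring against the golden dataset.
    correct = sum(run_agent(prompt) == expected for prompt, expected in golden)
    return correct / len(golden)


def should_roll_back(baseline_acc: float, candidate_acc: float) -> bool:
    # Rounding avoids float noise such as 0.020000000000000018 at the boundary.
    return round(baseline_acc - candidate_acc, 9) >= MAX_ACCURACY_DROP
```

In CI, compute `accuracy` for the production and candidate builds on the same golden set, and fail the deploy when `should_roll_back` returns `True`.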

The Feedback Loop

Modern agents use Self-Correction. When a task is complete, a separate "Critique Agent" reviews the output and provides a score. If the score is below the threshold, the agent is forced to retry with the critique as new context.
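The critique-and-retry loop can be sketched as follows. `generate` and `critique` are hypothetical callables that would wrap the worker model and the Critique Agent. The 0.8 threshold and the three-attempt cap are illustrative values. The cap matters because an unbounded retry loop is itself a hallucination-loop risk.

```python
from typing import Callable, Optional, Tuple

Critique = Tuple[float, str]  # (score in [0, 1], feedback text)


def self_correct(
    task: str,
    generate: Callable[[str, Optional[str]], str],
    critique: Callable[[str, str], Critique],
    threshold: float = 0.8,
    max_attempts: int = 3,
) -> Tuple[str, float, int]:
    feedback = None
    best_output, best_score = "", float("-inf")
    for attempt in range(1, max_attempts + 1):
        output = generate(task, feedback)         # feedback is None on the first try
        score, feedback = critique(task, output)  # the critique becomes new context
        if score > best_score:
            best_output, best_score = output, score
        if score >= threshold:
            return output, score, attempt
    # Out of attempts: return the best draft so the caller or a human can decide.
    return best_output, best_score, max_attempts
```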

6. Production Architecture: The Sovereign Agent Blueprint

The "Sovereign Stack" for agents is built on modularity and strict boundary enforcement.

- The Orchestrator: The central brain (e.g., Claude 3.5 Sonnet or GPT-5) that plans and delegates.

- The Tool Gateway (MCP): A secure layer that validates every tool call before it hits your infrastructure. The Model Context Protocol (MCP) has become the universal language for this interaction, providing a standardized schema for tools and resources. For a deep dive into the protocol wars, see MCP vs. REST vs. GraphQL: The 2026 API War.

- The Memory Server: A dedicated service that manages the Episodic and Semantic memory retrieval.

- The Human-in-the-Loop (HITL) Gateway: A mandatory pause point for high-risk actions.

Technical Implementation: The Tool-Call Guardrail

To prevent the "Tool-Call Storms" mentioned in section 4, we implement a token bucket for each agent:

- Capacity (burst limit): 50 tool calls.

- Refill Rate: 5 calls every 10 minutes (a sustained rate of 30 calls per hour).
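A minimal token-bucket sketch, treating the 50-call figure as the bucket's burst capacity and refilling 5 tokens every 600 seconds. The class name is hypothetical. The clock is injectable so the behavior can be tested without waiting. The refill is computed as `elapsed * tokens / period` so that whole periods add whole tokens exactly.

```python
import time
from typing import Callable


class ToolCallBucket:
    def __init__(
        self,
        capacity: int = 50,
        refill_tokens: int = 5,
        refill_period_s: float = 600.0,
        clock: Callable[[], float] = time.monotonic,
    ):
        self.capacity = capacity
        self.refill_tokens = refill_tokens
        self.refill_period_s = refill_period_s
        self.clock = clock
        self.tokens = float(capacity)  # start full, allowing an initial burst
        self.last = clock()

    def _refill(self) -> None:
        now = self.clock()
        earned = (now - self.last) * self.refill_tokens / self.refill_period_s
        self.tokens = min(self.capacity, self.tokens + earned)
        self.last = now

    def try_acquire(self) -> bool:
        """Spend one token for a tool call; return False if the agent must wait."""
        self._refill()
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False
```

When `try_acquire` returns `False`, the gateway should reject or queue the call rather than forward it. That turns a storm into back-pressure the orchestrator can observe.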

7. Case Study: The 'Infinite Loop' Disaster of 2025

A major logistics firm deployed an autonomous agent to "Optimize Shipping Routes." The agent had the power to book third-party carriers. Due to a flaw in its state management, the agent hallucinated that a specific route was blocked. It spent $250,000 in 15 minutes booking alternative carriers in a recursive loop before a human-in-the-loop alert finally triggered.

The Lesson: Never give an agent a "Blank Check." Every autonomous action must be bound by Cost Guardrails and Logic Timeouts. In 2026, we utilize Circuit Breakers—if an agent attempts the same tool call with the same parameters three times in a row, the session is killed.
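The circuit breaker can be sketched as follows. Two calls count as "the same" when the tool name and the canonicalized parameters (JSON-serialized with sorted keys) match, which is an assumption of this sketch. `SessionKilled` and `CircuitBreaker` are hypothetical names. Call `check` before executing each tool call.

```python
import json


class SessionKilled(RuntimeError):
    """Raised when the breaker trips; the orchestrator should end the session."""


class CircuitBreaker:
    def __init__(self, max_repeats: int = 3):
        self.max_repeats = max_repeats
        self.last_call = None
        self.repeats = 0

    def check(self, tool: str, params: dict) -> None:
        # Canonical form, so {"a": 1, "b": 2} and {"b": 2, "a": 1} compare equal.
        call = (tool, json.dumps(params, sort_keys=True))
        if call == self.last_call:
            self.repeats += 1
        else:
            self.last_call, self.repeats = call, 1
        if self.repeats >= self.max_repeats:
            raise SessionKilled(
                f"{tool} called {self.repeats} times in a row with identical parameters"
            )
```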

8. The Action Gap: From Thinking to Doing

The "Action Gap" is the distance between an agent knowing what to do and actually doing it correctly. In 2026, we bridge this gap using Large Action Models (LAMs).

Unlike LLMs, which are optimized for text, LAMs are trained on UI interactions and API protocols. When an agent decides to "Update the CRM," the LAM handles the actual clicks or GraphQL mutations, ensuring the high-level intent is translated into low-level execution with 100% fidelity.

The Divergence: RAG vs. Procedural Memory

While RAG is excellent for finding a PDF, it is useless for teaching an agent how to use your custom internal tool. Procedural memory solves this by storing Successful Traces. When an agent solves a complex multi-step task, we save that specific sequence of successful tool calls as a "Prime Procedure." The next time a similar task appears, the agent retrieves the Prime Procedure instead of "thinking" from scratch.

9. 2027–2030 Roadmap: The Rise of the Agentic OS

By 2030, we won't run "agents" on top of operating systems. The operating system will be agentic.

- 2027: Multi-Agent Standards. Inter-agent communication protocols (like a modernized FIPA) allow agents from different vendors to collaborate seamlessly.

- 2028: Persistent Memory Hardware. New chip architectures with dedicated "Context Cache" layers reduce the cost of long-term agent memory by 90%.

- 2029: The Rise of the 'Cognitive Proxy'. Individuals will use local agents as proxies for all digital interactions, filtering noise and executing complex life-admin tasks autonomously.

- 2030: The Sovereign Core. Every user possesses a personal, local "Prime Agent" that manages their digital life, operating with absolute privacy on edge hardware.

10. Deep Dive: Securing the Agentic Perimeter

Security in 2026 is no longer just about firewalls; it is about Prompt Injection Defense and Tool-Call Sanitization.

The "Double-Audit" Protocol

For every tool call, we run a two-stage validation:

- Schema Validation: Does the input match the tool's JSON Schema? (Handled by the MCP Gateway).

- Intent Validation: Does the tool call align with the agent's current high-level goal? (Handled by a separate, smaller "Security Model" like Llama 3.2 3B).
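The two stages can be sketched as follows. To avoid a third-party dependency, the schema stage supports only a tiny subset of JSON Schema: `required`, primitive `type` checks, and rejection of unknown keys, as `additionalProperties: false` would. A production MCP gateway would use a full validator. `intent_check` is a hypothetical callable standing in for the smaller security model.

```python
from typing import Callable

TYPE_MAP = {"string": str, "integer": int, "number": (int, float), "boolean": bool}


def validate_schema(args: dict, schema: dict) -> list:
    """Stage 1: return a list of schema violations (an empty list means valid)."""
    errors = [f"missing: {k}" for k in schema.get("required", []) if k not in args]
    props = schema.get("properties", {})
    for key, value in args.items():
        if key not in props:
            errors.append(f"unexpected: {key}")
            continue
        declared = props[key]["type"]
        # bool is a subclass of int in Python, so reject it for numeric fields.
        if isinstance(value, bool) and declared != "boolean":
            errors.append(f"wrong type: {key}")
        elif not isinstance(value, TYPE_MAP[declared]):
            errors.append(f"wrong type: {key}")
    return errors


def double_audit(
    tool: str,
    args: dict,
    schema: dict,
    goal: str,
    intent_check: Callable[[str, str, dict], bool],
) -> bool:
    if validate_schema(args, schema):
        return False                       # Stage 1 failed: malformed call
    return intent_check(goal, tool, args)  # Stage 2: does the call serve the goal?
```

Running the cheap schema check first means the security model is only invoked for well-formed calls.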

11. Orchestration Frameworks: CrewAI vs. LangGraph in 2026

The market has consolidated around two primary philosophies for agent orchestration.

CrewAI: The Role-Based Generalist

CrewAI excels at "Collaborative Reasoning." It is designed for multi-agent systems where specific roles (Researcher, Writer, Auditor) must work together. In 2026, CrewAI has introduced Dynamic Crew Scaling, where the orchestrator can spin up new agents on the fly to handle sub-tasks.

LangGraph: The State-Machine Specialist

LangGraph is the choice for industrial processes where deterministic flow is mandatory. It treats agents as nodes in a directed graph, with explicit state transitions and "checkpoints" for recovery. This is the foundation of the Sovereign Stack for engineering and financial automation.

12. Strategic FAQ for Senior Architects

What is the best model for an autonomous agent in 2026?

It depends on the layer. For the Orchestrator, you need high-reasoning models like Claude 3.5 Sonnet or GPT-4o. For Sub-agents handling specific, repetitive tool tasks, Small Language Models (SLMs) like Phi-4 are more cost-effective and faster.

How do I prevent an agent from "looping" on an error?

Implement a Maximum Recursion Depth at the orchestrator level. Additionally, use a "Watchdog Agent" that monitors the trace logs for repetitive patterns and kills the process if a loop is detected.
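Both safeguards can be combined in one loop: a hard `max_iterations` cap, plus a watchdog that flags a loop when the most recent actions repeat with a short period (A, B, A, B, ...). In this minimal sketch, the `step` callable, the period limit, and the repeat count are hypothetical choices.

```python
from typing import Callable, Optional


def find_cycle(actions: list, max_period: int = 3, min_repeats: int = 3) -> Optional[int]:
    """Return the period if the tail of `actions` is one block repeated min_repeats times."""
    for period in range(1, max_period + 1):
        window = period * min_repeats
        if len(actions) < window:
            break  # longer periods need even more history
        tail = actions[-window:]
        if tail == tail[:period] * min_repeats:
            return period
    return None


def run_with_watchdog(step: Callable[[list], Optional[str]], max_iterations: int = 25) -> tuple:
    """Call `step` until it returns None (done), a cycle appears, or the cap is hit."""
    history = []
    for _ in range(max_iterations):
        action = step(history)
        if action is None:
            return "done", history
        history.append(action)
        if find_cycle(history) is not None:
            return "killed: loop detected", history
    return "killed: max_iterations reached", history
```

The period check catches oscillations, such as alternating between two tools, that an exact-repeat circuit breaker would miss.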

Is RAG enough for agent memory?

No. RAG handles Semantic memory (facts). To build a truly "smart" agent, you also need Episodic memory (past experiences) and Procedural memory (learned workflows).

How do we handle security for tool-using agents?

Use the Principle of Least Privilege. Every tool given to an agent should have its own restricted API key. Never give an agent a "Global Admin" token. Use an MCP gateway to audit every outgoing request.

Can agents handle non-deterministic tool outputs?

Yes, but you must build Retry Logic with Exponential Backoff. The agent should be trained to recognize "Transient Failures" (like a 503 error) and retry, versus "Fatal Failures" (like a 403 error) which require a change in strategy.
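The transient-versus-fatal distinction can also be enforced in code at the tool layer. This minimal sketch assumes tool errors surface as an exception carrying an HTTP status code. Treating 429, 502, 503, and 504 as transient is a common convention, not a rule from this article. `ToolError`, the base delay, and the attempt cap are hypothetical. Full jitter spreads retries out so that many agents do not retry in lockstep.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")
TRANSIENT_STATUSES = {429, 502, 503, 504}  # retried; any other status is fatal


class ToolError(Exception):
    """A failed tool call that carries the upstream HTTP status code."""

    def __init__(self, status: int, message: str = ""):
        super().__init__(f"{status} {message}".strip())
        self.status = status


def call_with_backoff(
    fn: Callable[[], T],
    max_attempts: int = 5,
    base_delay_s: float = 0.5,
    max_delay_s: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return fn()
        except ToolError as err:
            is_last = attempt == max_attempts - 1
            if err.status not in TRANSIENT_STATUSES or is_last:
                raise  # fatal (e.g. 403) or out of retries: let the planner re-plan
            # Exponential backoff with full jitter, capped at max_delay_s.
            ceiling = min(max_delay_s, base_delay_s * 2 ** attempt)
            sleep(random.uniform(0, ceiling))
    raise RuntimeError("unreachable")
```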

What is the most common reason AI agents fail in production?

Context Saturation. When the working memory becomes too cluttered with irrelevant tool outputs, the agent's reasoning degrades rapidly. Active context pruning is mandatory.
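Active pruning can be sketched under a few assumptions. Messages are dicts with a `role` and `content`. Token counts are approximated by whitespace-separated words, where a real system would use the model's tokenizer. Only `tool` messages are pruning candidates, and the most recent few are protected. The oldest tool outputs are replaced with a short stub until the context fits the budget.

```python
def approx_tokens(text: str) -> int:
    return len(text.split())  # crude stand-in for a real tokenizer


def prune_context(messages: list, budget: int, keep_recent_tools: int = 2) -> list:
    """Stub out the oldest tool outputs until the total fits within `budget`."""
    pruned = [dict(m) for m in messages]  # don't mutate the caller's history
    tool_idx = [i for i, m in enumerate(pruned) if m["role"] == "tool"]
    candidates = tool_idx[:-keep_recent_tools] if keep_recent_tools else tool_idx
    total = sum(approx_tokens(m["content"]) for m in pruned)
    for i in candidates:  # oldest first
        if total <= budget:
            break
        before = approx_tokens(pruned[i]["content"])
        pruned[i]["content"] = "[tool output pruned]"
        total -= before - approx_tokens(pruned[i]["content"])
    return pruned
```

Leaving a stub instead of deleting the message keeps the conversation structure intact, so the model can still see that a tool was called.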

How do you manage 'Agentic Drift' over long sessions?

We use "Anchor Prompts." Every few turns, the orchestrator is reminded of its primary objective and the constraints of the task. This prevents the agent from deviating into unrelated sub-tasks.
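Anchor prompts can be sketched for a chat-style message list. The five-turn interval and the anchor wording below are hypothetical choices.

```python
def with_anchor(
    messages: list, turn: int, objective: str, constraints: list, every: int = 5
) -> list:
    """Return messages plus a reminder of the objective every `every` turns."""
    if turn == 0 or turn % every != 0:
        return messages
    anchor = (
        f"Reminder: the primary objective is {objective}. "
        f"Constraints: {'; '.join(constraints)}. "
        "Ignore sub-tasks that do not serve this objective."
    )
    return messages + [{"role": "system", "content": anchor}]  # new list; input unchanged
```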

What is 'Self-Healing' in agentic systems?

It is the ability for an agent to detect its own logical failures (e.g., an invalid tool output) and automatically trigger a "Refactor Loop" where it re-evaluates its plan before attempting the same action again.

How does MCP solve the tool-integration bottleneck?

MCP provides a standard, secure way for agents to discover and interact with tools across different platforms. It eliminates the need for custom "tool-call wrappers" for every single API.

Can we use agents for mission-critical financial transactions?

Only with a Multi-Level Approval Gateway. The agent should be able to prepare the transaction, but a human (or a separate, non-agentic validation service) must sign the final execution.

13. The Architect's Checklist: 10 Commandments of Agentic Production

Before you ship your first autonomous agent fleet, verify your architecture against this checklist:

- Strict Token Budgeting: Does every agent have a hard cap on per-session and per-hour token usage?

- Episodic Checkpointing: Can the agent resume its work if the server restarts or the context window resets?

- Recursive Depth Guard: Is there a `max_iterations` parameter enforced at the platform level?

- Schema-First Tooling: Are all tools defined with precise JSON schemas and validation logic?

- Multi-Model Fallbacks: If the primary orchestrator (e.g., Claude) is down, can a secondary model (e.g., Llama) take over the planning?

- Sovereign Audit Log: Are 100% of the agent's "thoughts" and actions recorded in a non-volatile trace database?

- Human Intercepts: Are there defined "Stop Points" for actions with irreversible real-world consequences?

- Context Pruning Strategy: Do you have a mechanism to remove stale tool outputs from the active context window?

- Eval-Driven Deployment: Is your CI/CD pipeline integrated with a continuous evaluation framework?

- Data Sovereignty Compliance: Does the memory system adhere to GDPR/CCPA requirements regarding PII removal?

14. Governance: The 'CISO for Agents' Model

In 2026, the role of the CISO has expanded to include Agentic Governance. This involves:

- Identity for Agents: Giving every agent a unique, verifiable cryptographic identity.

- Action Auditing: Real-time monitoring of agentic behavior against corporate policy.

- Red-Teaming Agents: Systematically attempting to "jailbreak" agents into performing unauthorized tool calls.

This governance layer is what separates "Shadow AI" from "Sovereign Intelligence."

15. The Recovery Blueprint: Surviving the Logic Storm

When an agent enters a hallucination loop, your system must trigger a Recovery Protocol:

- Detect: Monitor for high repetition in tool-call parameters or semantic similarity in consecutive "thought" blocks.

- Interrupt: Pause the agent's execution.

- Reset: Roll back the agent's memory to the last "Known Good State" (checkpoint).

- Intervene: Inject a "Strategic Correction" prompt from a separate Auditor model.

- Resume: Allow the agent to restart the specific sub-task with the new guidance.

This "Self-Healing" loop is the hallmark of a production-ready system.
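The five steps can be wired together in a minimal sketch. Here detection uses exact repetition of the last three tool calls. The semantic-similarity variant would compare embeddings of consecutive thought blocks instead. `auditor` is a hypothetical callable standing in for the separate Auditor model, and the agent state is a plain dict.

```python
import copy
from typing import Callable


class RecoverableAgent:
    def __init__(self, state: dict, auditor: Callable[[dict, list], str]):
        self.state = state
        self.auditor = auditor
        self.checkpoint = copy.deepcopy(state)  # last Known Good State
        self.paused = False

    def mark_known_good(self) -> None:
        self.checkpoint = copy.deepcopy(self.state)

    def detect(self, recent_calls: list, window: int = 3) -> bool:
        # 1. Detect: the last `window` tool calls are identical.
        tail = recent_calls[-window:]
        return len(tail) == window and all(c == tail[0] for c in tail)

    def recover(self, recent_calls: list) -> bool:
        if not self.detect(recent_calls):
            return False
        self.paused = True                                 # 2. Interrupt: signal the executor to stop
        self.state = copy.deepcopy(self.checkpoint)        # 3. Reset to the checkpoint
        guidance = self.auditor(self.state, recent_calls)  # 4. Intervene with a correction
        self.state.setdefault("notes", []).append(guidance)
        self.paused = False                                # 5. Resume with the new guidance
        return True
```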

16. The Mathematical Divergence of Agentic Entropy

One of the most overlooked aspects of long-running agentic sessions is entropy accumulation. In information theory, entropy represents the level of uncertainty or randomness in a system. For AI agents, entropy increases with every turn in the conversation.

The Problem of Context Dilution

As the agent generates tokens, the probability distribution over the next token becomes increasingly "flat." This is because the signal (the original task) is being diluted by the noise (intermediate reasoning, failed tool calls, and verbose error messages). By turn 50, the agent is often operating in a high-entropy state where the probability of a hallucination approaches 40%.

Mitigation: Semantic Anchor Points

To counteract this, we implement Semantic Anchor Points. Every 5 turns, the orchestrator is required to generate a "State Summary" that is validated against the original goal. If the semantic distance between the State Summary and the Goal exceeds a predefined threshold (measured as cosine distance, i.e., 1 minus the cosine similarity of their embeddings), the agent is forced to "reset" its working memory to the last anchor point.

17. The Sovereign Future: Agents as Infrastructure

As we move toward 2030, the "Agent" will no longer be an application. It will be the Interface to Reality. Your agent will handle your scheduling, your financial planning, and your digital identity. It will operate within a "Sovereign Sandbox," ensuring that your personal data never leaves your hardware while providing the full power of global intelligence.

The transition from "Generative AI" to "Agentic AI" is the transition from "Speaking" to "Acting." It is the most significant shift in human-computer interaction since the invention of the mouse.

STRATEGIC OVERVIEW

Production agents in 2026 are not chatbots; they are sophisticated state machines. Success requires moving beyond simple RAG into a multi-tier memory taxonomy (Episodic/Semantic/Procedural). To survive at scale, you must architect for the inevitable failure cascades—hallucination loops and tool storms—using a robust observability stack and strict "Sovereign Stack" boundaries. The goal is no longer just 'thinking,' but bridging the 'Action Gap' with deterministic execution.

AI Agents in production fail for three reasons: saturated context, hallucinated state, and infinite logical loops. In 2026, we fix this with the Sovereign Stack—a framework for episodic memory and stateful orchestration. Stop building demos; start architecting production-grade agentic systems.